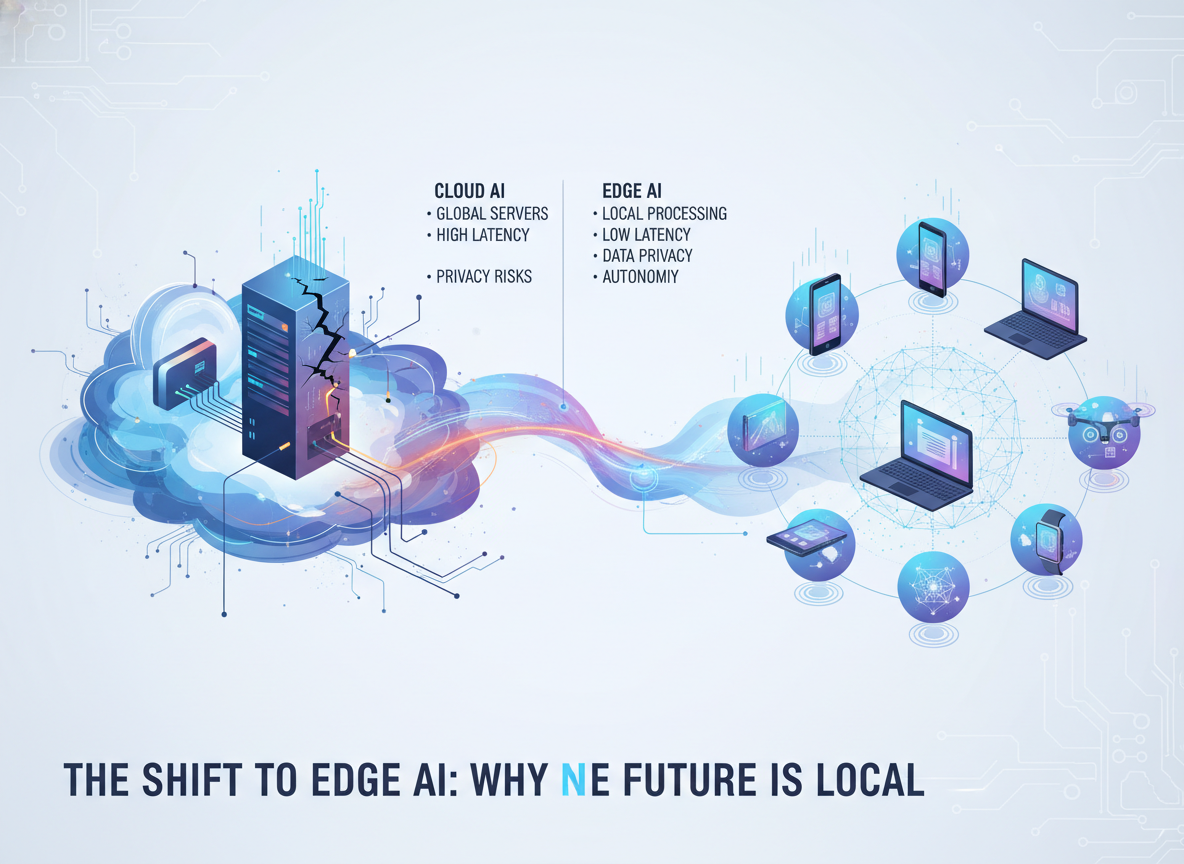

For years, AI has been synonymous with the cloud. However, a new trend toward 'Edge AI' is emerging, driven by the need for privacy and lower latency. Companies like Apple and Qualcomm are leading this charge by integrating dedicated Neural Processing Units (NPUs) directly into consumer chipsets.

Running models locally means that sensitive data never leaves the user's device. This is particularly vital for healthcare applications and personal assistants that handle private documents. Furthermore, local processing eliminates the network round trip to a server, so responses can feel near-instantaneous and AI features can be integrated more tightly into the OS.

Challenges remain, primarily battery life and the limited memory of mobile devices. To address them, researchers are developing techniques such as quantization (storing weights at lower numeric precision) and pruning (removing weights that contribute little to the output) to shrink large models without losing significant accuracy. The goal is to bring GPT-class intelligence to your pocket without requiring an internet connection.
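
To make quantization and pruning a little more concrete, here is a minimal sketch in plain Python. It is an illustration under simplifying assumptions, not how any particular framework or chipset does it: the weights are a flat list of floats, quantization is symmetric 8-bit with a single scale for the whole list, and pruning simply zeroes the smallest-magnitude values. The function names (`quantize_int8`, `dequantize`, `prune_by_magnitude`) are hypothetical and chosen only for this example.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric 8-bit quantization with one scale: w is approximated by q * scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Map the largest magnitude to 127; fall back to 1.0 for all-zero input.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range used here.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integer codes."""
    return [v * scale for v in q]


def prune_by_magnitude(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the given fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    k = int(len(weights) * sparsity)  # number of weights to drop
    # Indices sorted from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.01, 0.8]  # toy values, not from a real model
    q, scale = quantize_int8(weights)
    print("int8 codes:", q)
    print("restored:  ", dequantize(q, scale))
    print("pruned:    ", prune_by_magnitude(weights, sparsity=0.5))
```

Storing each weight as an 8-bit integer instead of a 32-bit float cuts weight storage to roughly a quarter, at the cost of a small rounding error per weight (at most half a quantization step in this scheme). Pruning helps in a different way: zeroed weights can be stored sparsely or skipped, although real savings depend on hardware and runtime support.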